
Crawlab is a Golang-based distributed web crawler management platform. It supports various languages, including Python, NodeJS, Go, Java, and PHP, as well as various web crawler frameworks, including Scrapy, Puppeteer, and Selenium.

Installation

Three methods:

Pre-requisite (Docker)

- Docker 18.03+

- Redis 5.x+

- MongoDB 3.6+

- Docker Compose 1.24+ (optional but recommended)

Pre-requisite (Direct Deploy)

- Go 1.12+

- Node 8.12+

- Redis 5.x+

- MongoDB 3.6+

Quick Start

The quick start relies on `docker-compose` and its `docker-compose.yml` configuration file.

Run

Docker

Use `docker-compose` with a `docker-compose.yml` file to start Crawlab, then open `http://localhost:8080` in a browser to see the UI. For Docker deployment details, please refer to the relevant documentation.

Screenshot

Login

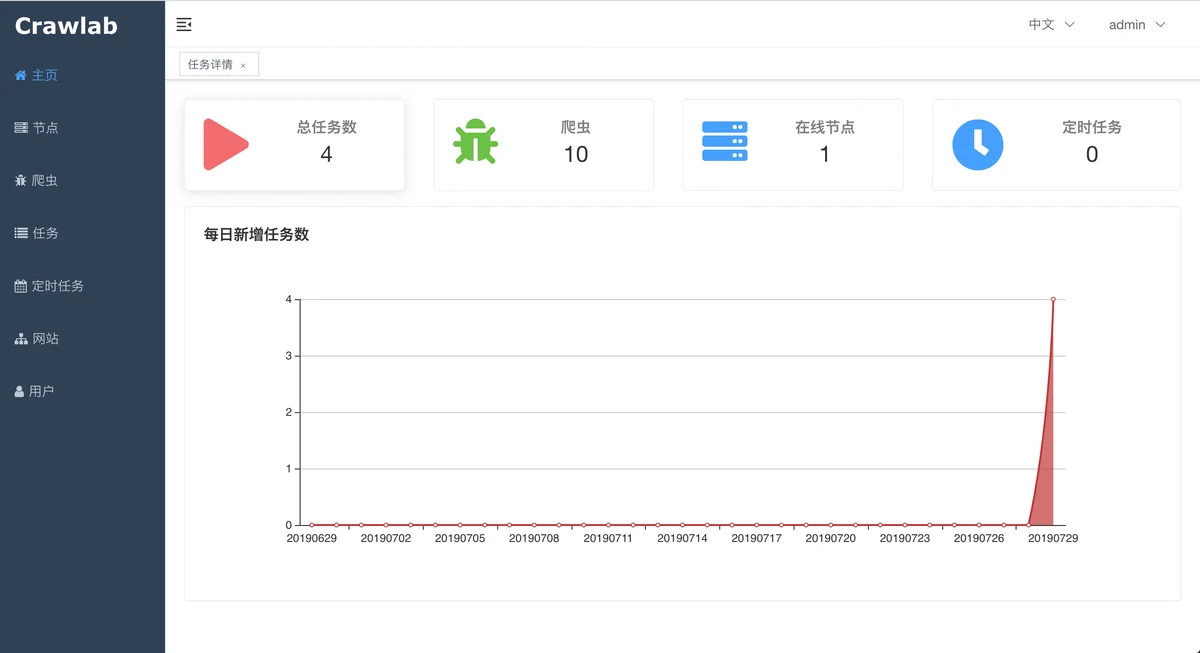

Home Page

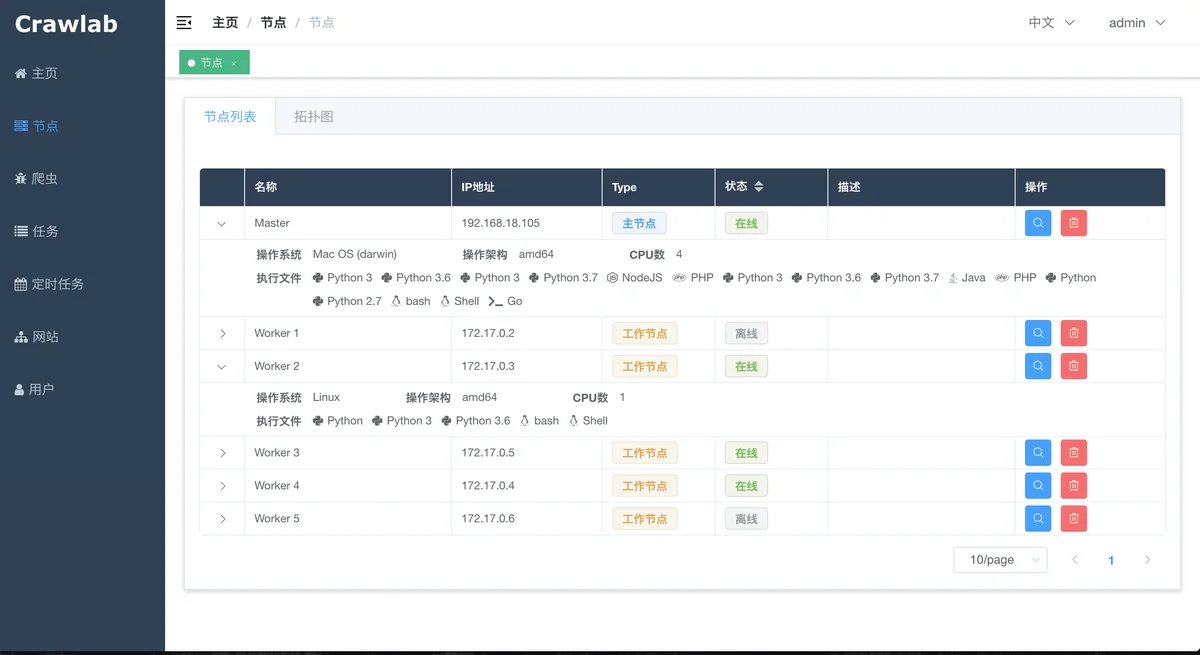

Node List

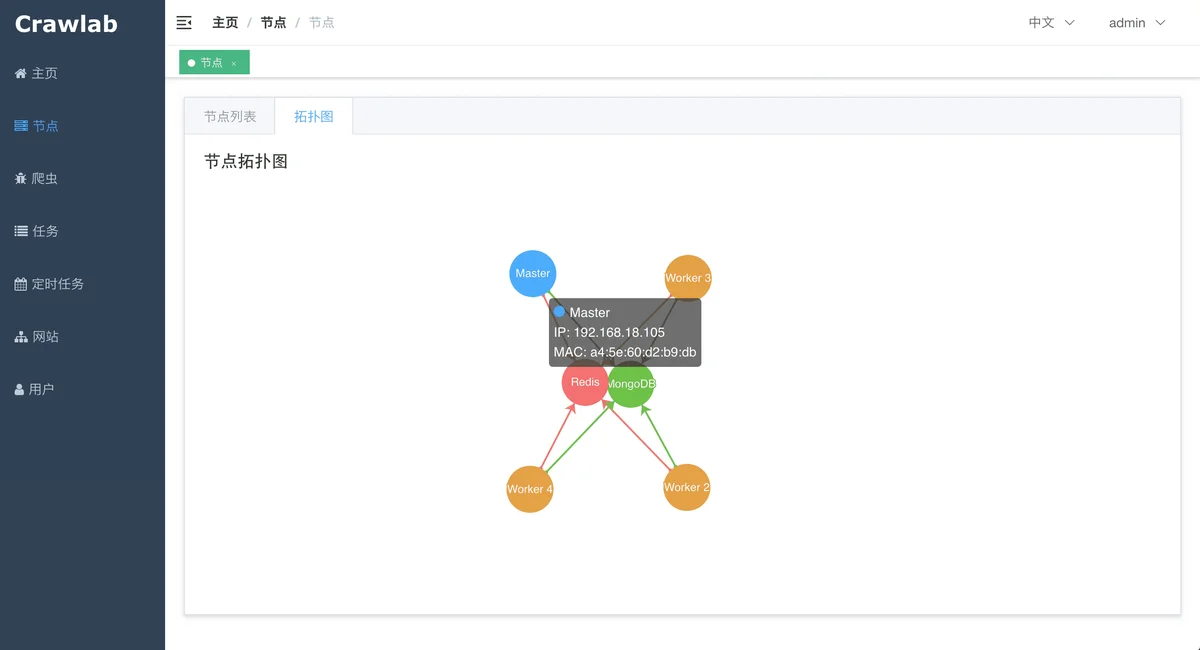

Node Network

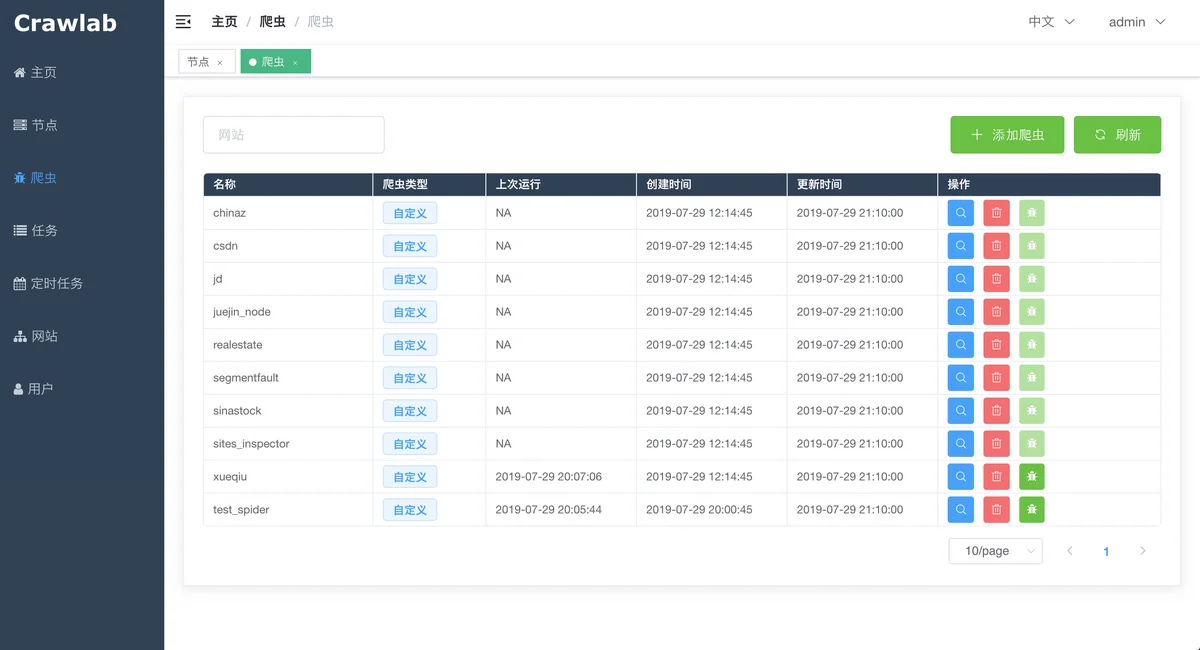

Spider List

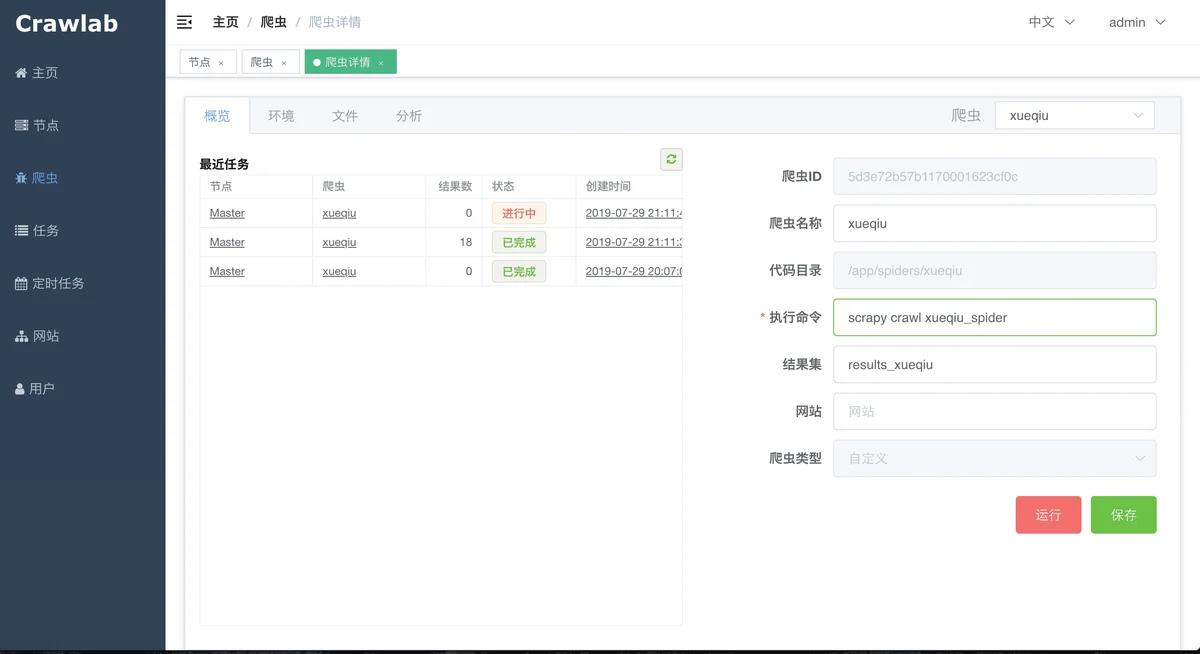

Spider Overview

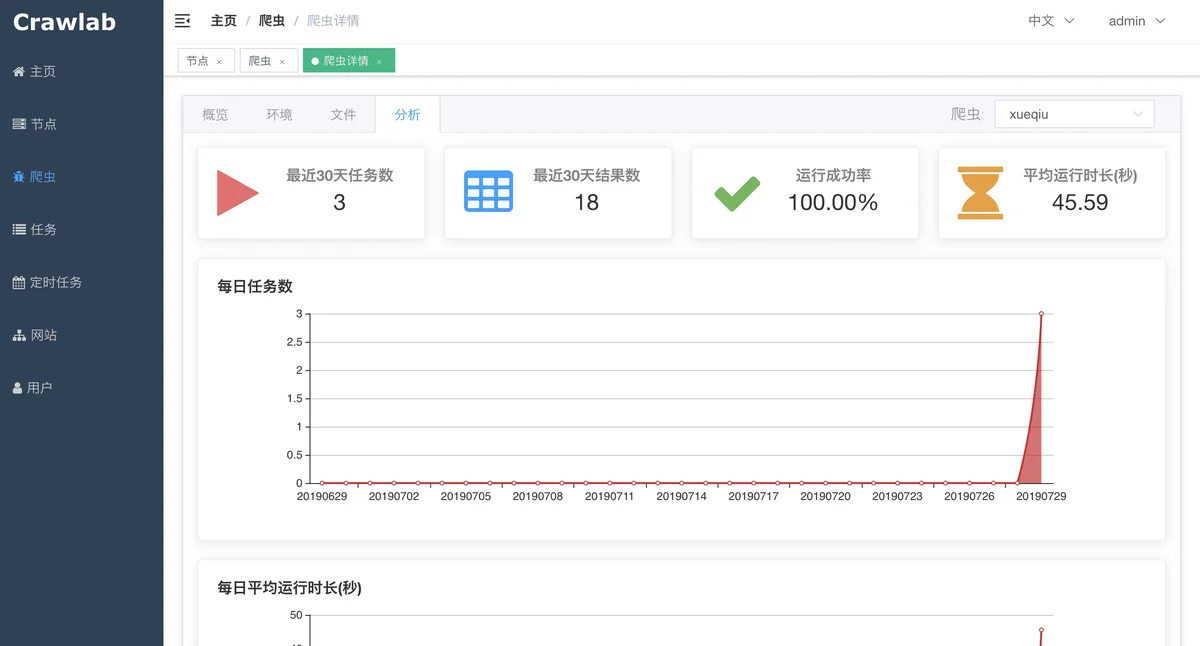

Spider Analytics

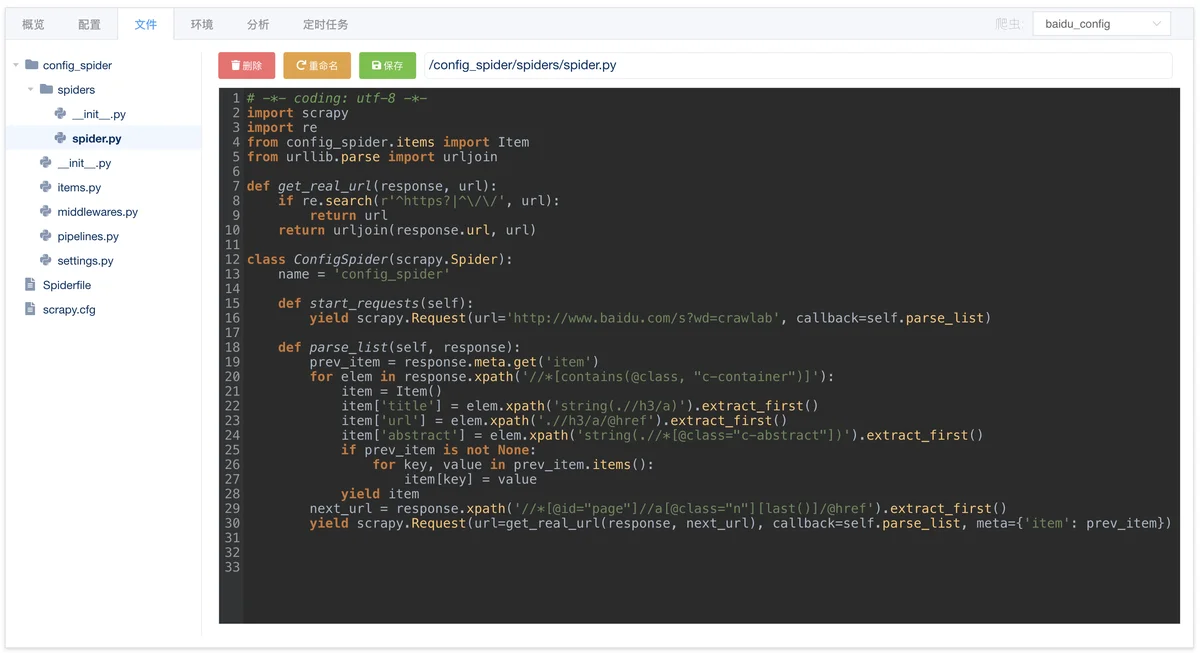

Spider File Edit

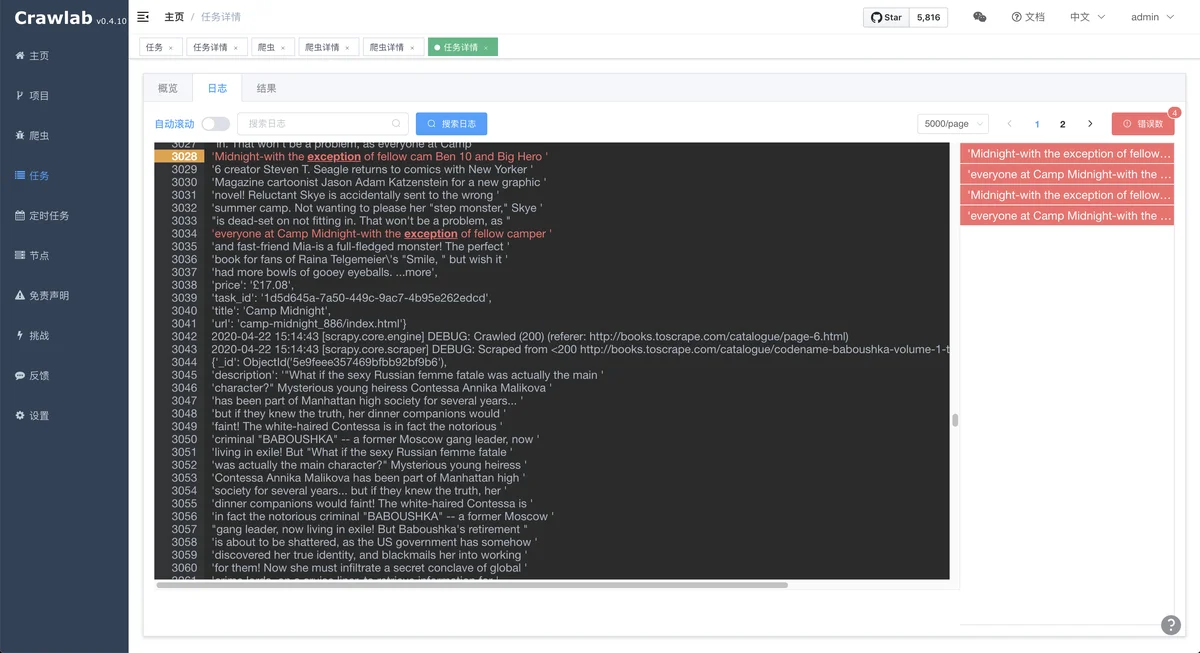

Task Log

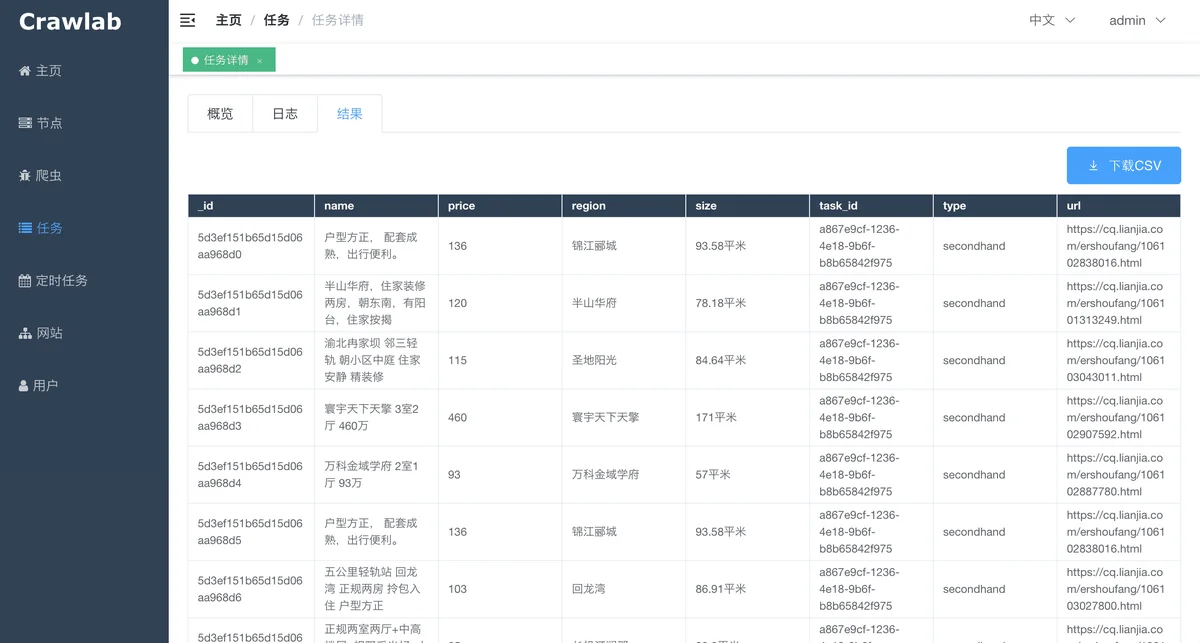

Task Results

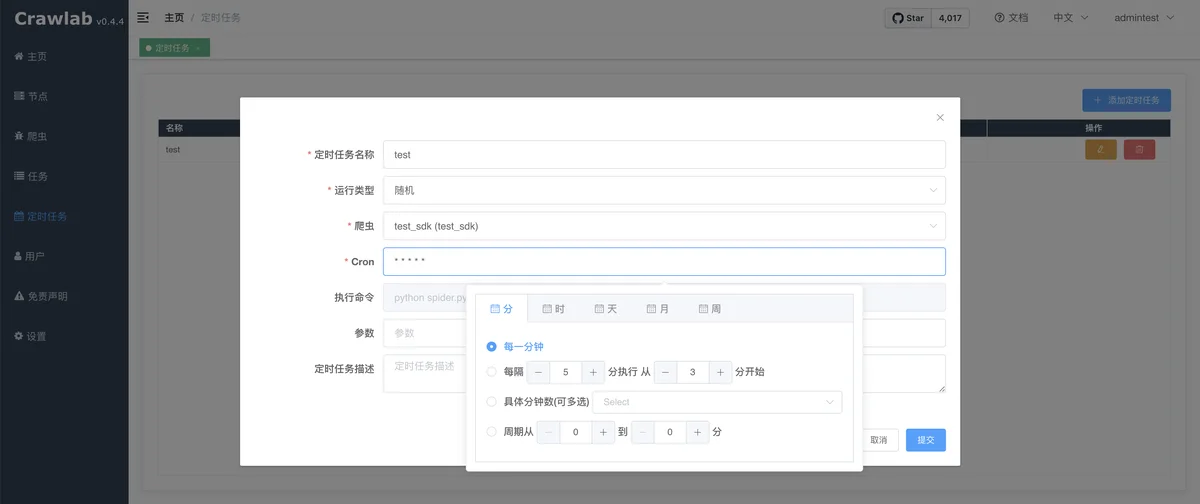

Cron Job

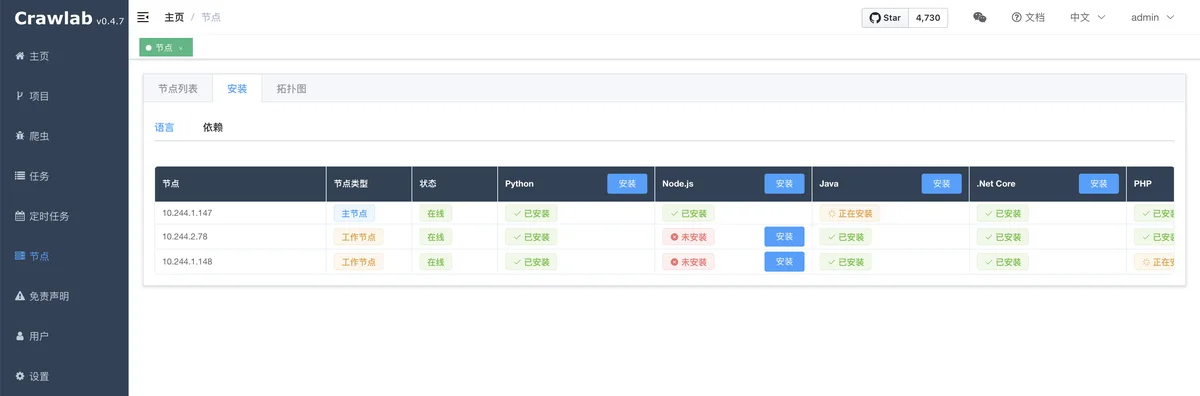

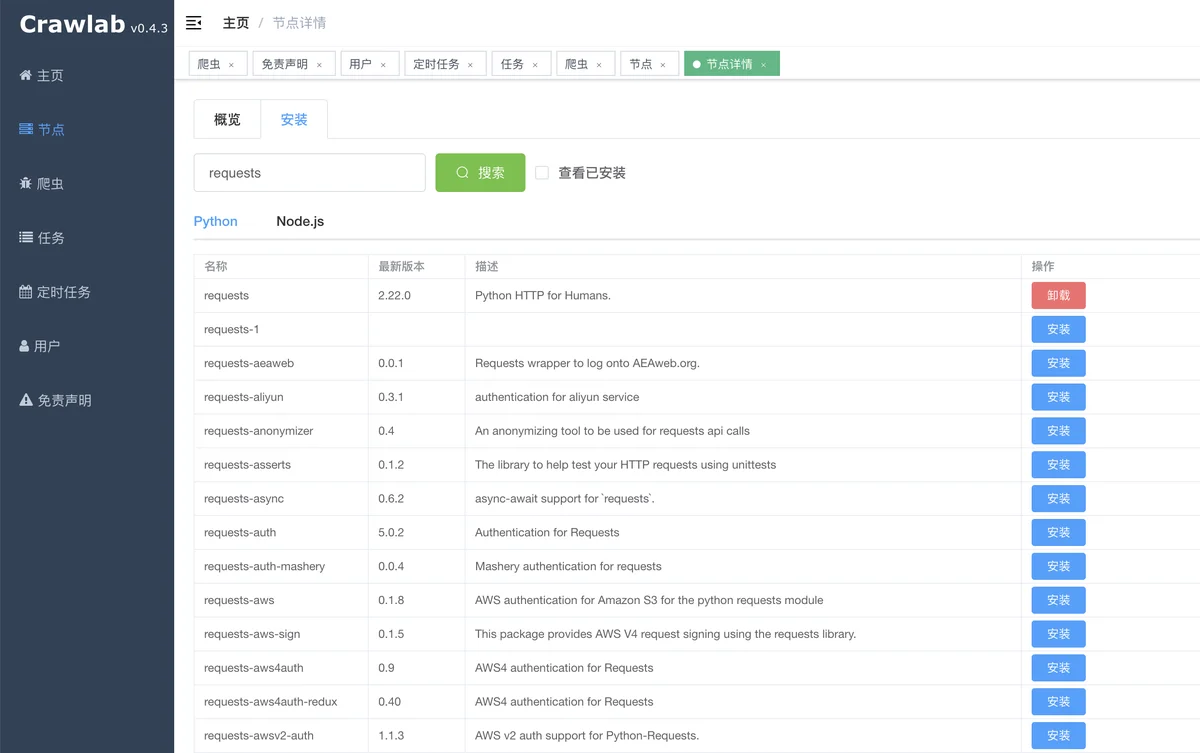

Language Installation

Dependency Installation

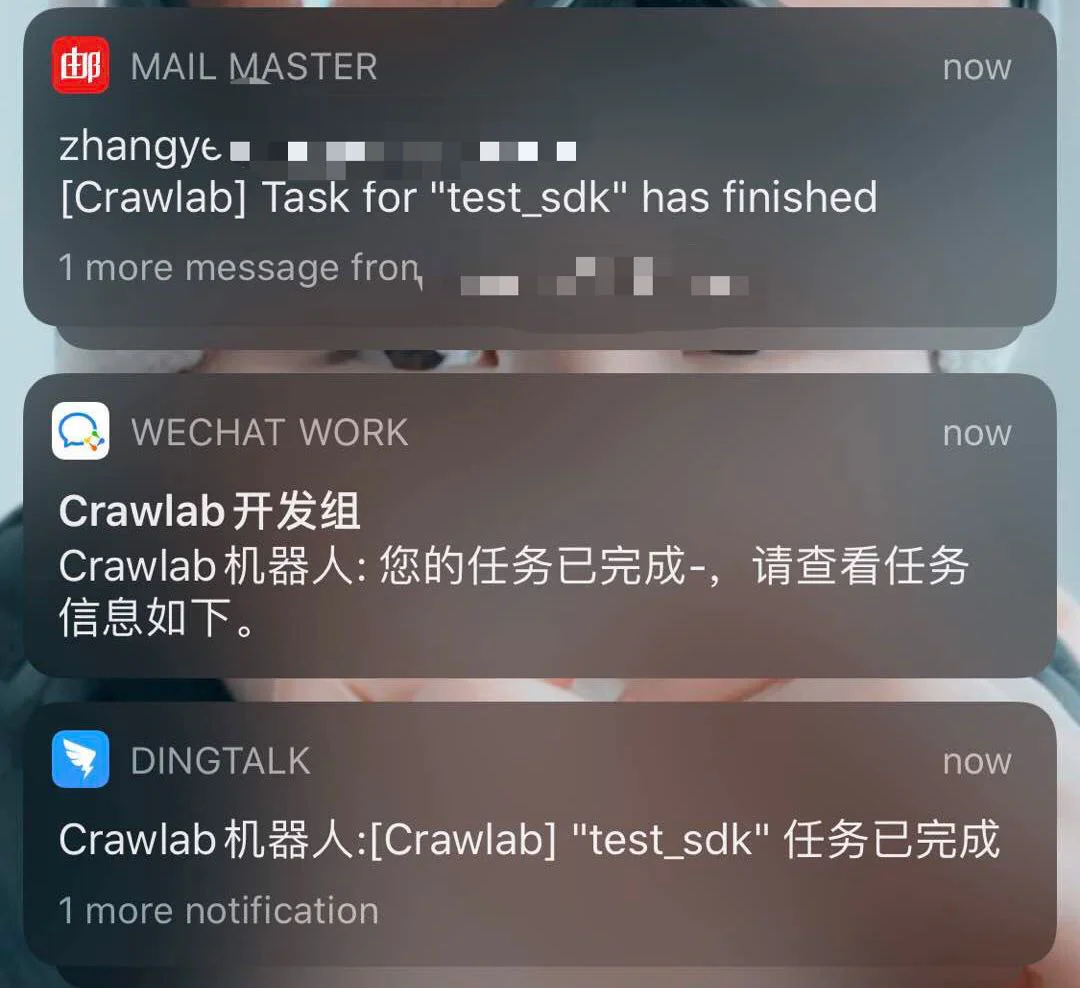

Notifications

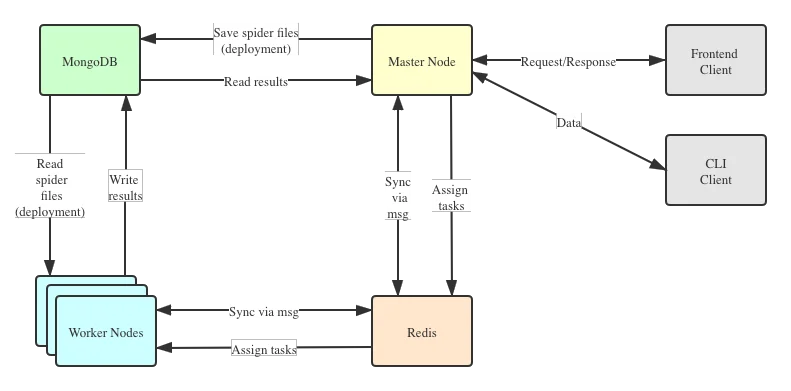

Architecture

The architecture of Crawlab consists of the Master Node, multiple Worker Nodes, and the Redis and MongoDB databases, which are mainly used for node communication and data storage.

v0.3.0

Master Node

The Master Node is the core of the Crawlab architecture. It is the central control system of Crawlab.

The Master Node offers the following services:

- Crawling Task Coordination;

- Worker Node Management and Communication;

- Spider Deployment;

- Frontend and API Services;

- Task Execution (one can regard the Master Node as a Worker Node)

The Master Node communicates with the frontend app and sends crawling tasks to the Worker Nodes. Meanwhile, the Master Node synchronizes (deploys) spiders to the Worker Nodes via Redis and MongoDB GridFS.

Worker Node

Worker Nodes execute crawling tasks and communicate with the Master Node through Redis `PubSub`.

MongoDB

MongoDB is the operational database of Crawlab. It stores data about nodes, spiders, tasks, schedules, etc. MongoDB GridFS is the medium through which the Master Node stores spider files and synchronizes them to the Worker Nodes.

Redis
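A minimal sketch of how node discovery over a Redis hash could look, assuming each node writes its info with `HSET` into a hash named `nodes` and the Master Node reads that hash back to list nodes. The function names, the JSON payload, and the `FakeRedis` stand-in are hypothetical and exist only to keep the example runnable without a server. A client from the `redis` Python package exposes `hset` and `hgetall` with the same call shapes used here.

```python
import json
import time


class FakeRedis:
    """In-memory stand-in exposing only hset/hgetall (hypothetical helper)."""

    def __init__(self):
        self._hashes = {}

    def hset(self, name, key, value):
        bucket = self._hashes.setdefault(name, {})
        is_new = key not in bucket
        bucket[key] = value
        return int(is_new)  # Redis reports how many new fields were added

    def hgetall(self, name):
        return dict(self._hashes.get(name, {}))


def register_node(client, node_id, ip, is_master=False):
    """Write this node's info into the `nodes` hash (HSET nodes <id> <json>)."""
    info = {"ip": ip, "is_master": is_master, "updated_at": time.time()}
    client.hset("nodes", node_id, json.dumps(info))


def list_nodes(client):
    """Read the `nodes` hash back (HGETALL nodes) and decode each entry."""
    return {node_id: json.loads(raw) for node_id, raw in client.hgetall("nodes").items()}


client = FakeRedis()
register_node(client, "master-1", "10.0.0.1", is_master=True)
register_node(client, "worker-1", "10.0.0.2")
nodes = list_nodes(client)
print(sorted(nodes))
```

Storing one field per node keeps registration idempotent: a node that registers again simply overwrites its own entry.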

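Redis provides the node communication service in Crawlab. For example, nodes use `HSET` to write their information into a hash named `nodes`.

Frontend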

The frontend is a single-page application (SPA) based on Vue-Element-Admin. It reuses many Element-UI components to render its views.

Integration with Other Frameworks

The `crawlab-sdk` package provides `helper` methods that make it easier to integrate your spiders into Crawlab.

Scrapy

In the `settings.py` of your Scrapy project, update the `ITEM_PIPELINES` variable (a `dict`).

Then start the Scrapy spider. After it finishes, you should be able to see the scraped results in Task Detail -> Result.
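For reference, `ITEM_PIPELINES` in a Scrapy project's `settings.py` is a dict that maps a pipeline's dotted import path to an integer priority (lower values run first). The sketch below shows the shape of such an entry together with a bare-bones pipeline. The module path `myproject.pipelines.TaskTagPipeline`, the class itself, and the `task_id` field are hypothetical placeholders, not the pipeline shipped with `crawlab-sdk`, so substitute the path documented for the SDK version you installed.

```python
import os

# settings.py (sketch): dotted path -> priority, customarily in the 0-1000 range.
ITEM_PIPELINES = {
    "myproject.pipelines.TaskTagPipeline": 888,  # hypothetical path, replace with the SDK's pipeline
}


class TaskTagPipeline:
    """Hypothetical pipeline that stamps each item with the current task ID."""

    def process_item(self, item, spider):
        # CRAWLAB_TASK_ID is assumed to be set in the environment of the task process.
        item["task_id"] = os.environ.get("CRAWLAB_TASK_ID")
        return item  # Scrapy hands the returned item to the next pipeline


os.environ["CRAWLAB_TASK_ID"] = "demo-task"  # simulate the variable for a local run
tagged = TaskTagPipeline().process_item({"title": "example"}, spider=None)
print(tagged)
```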

General Python Spider

Please add the content below to your spider files to save results.

Then start the spider. After it finishes, you should be able to see the scraped results in Task Detail -> Result.

Other Frameworks / Languages
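A minimal sketch for spiders that do not use the SDK, assuming Crawlab sets `CRAWLAB_TASK_ID` and `CRAWLAB_COLLECTION` in the environment of the task process and that results are matched to a task through a `task_id` field (the field name is an assumption). `save_result` and `ListCollection` are hypothetical helpers. `ListCollection` only mimics the `insert_one` method of a pymongo collection so that the example runs without a database.

```python
import os


class ListCollection:
    """In-memory stand-in for a MongoDB collection (hypothetical, insert_one only)."""

    def __init__(self, name):
        self.name = name
        self.docs = []

    def insert_one(self, doc):
        self.docs.append(doc)


def save_result(result, open_collection, environ=None):
    """Tag a result with the task ID and store it in the task's collection."""
    env = os.environ if environ is None else environ
    task_id = env.get("CRAWLAB_TASK_ID")
    collection_name = env.get("CRAWLAB_COLLECTION", "results")  # fallback name is an assumption
    doc = dict(result, task_id=task_id)  # copy so the caller's dict stays untouched
    collection = open_collection(collection_name)
    collection.insert_one(doc)
    return collection


# Simulated environment; inside a real task these values would come from Crawlab.
fake_env = {"CRAWLAB_TASK_ID": "task-42", "CRAWLAB_COLLECTION": "results_demo"}
store = save_result({"url": "https://example.com", "title": "Example"}, ListCollection, fake_env)
print(store.name, store.docs)
```

With pymongo, `open_collection` could instead be a function that returns `client[db_name][name]` for a connected `MongoClient`; the connection details depend on your deployment. The same two variables can be read from any language that has access to the process environment.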

Spiders written in other frameworks or languages can read the `CRAWLAB_TASK_ID` and `CRAWLAB_COLLECTION` environment variables.

Comparison with Other Frameworks

There are existing spider management frameworks. So why use Crawlab?

The reason is that most existing platforms depend on Scrapyd, which limits the choice to Python and Scrapy. Scrapy is certainly a great web crawling framework, but it cannot do everything.

Crawlab is easy to use and general enough to run spiders written in any language and any framework. It also has a beautiful frontend interface that makes managing spiders much easier.

| Framework | Technology | Pros | Cons | Github Stats |
|---|---|---|---|---|
| Crawlab | Golang + Vue | Not limited to Scrapy; available for all programming languages and frameworks. Beautiful UI. Native support for distributed spiders. Supports spider management, task management, cron jobs, result export, analytics, notifications, configurable spiders, online code editor, etc. | Does not yet support spider versioning. | |
| | Python Flask + Vue | Beautiful UI, built-in Scrapy log parser, stats and graphs for task execution; supports node management, cron jobs, mail notifications, and mobile. A full-featured spider management platform. | Does not support spiders other than Scrapy. Limited performance because of the Python Flask backend. | |
| Gerapy | Python Django + Vue | Built by web crawler guru Germey Cui. Simple installation and deployment. Beautiful UI. Supports node management, code editing, configurable crawl rules, etc. | Again, does not support spiders other than Scrapy. Many bugs in v1.0 according to user feedback; looking forward to improvements in v2.0. | |
| | Python Flask | Open-source Scrapyhub. Concise and simple UI. Supports cron jobs. | Perhaps too simplified: no pagination, no node management, and no support for spiders other than Scrapy. | |

Contributors

Community & Sponsorship

If you feel Crawlab could benefit your daily work or your company, please add the author's WeChat account with the note "Crawlab" to join the discussion group. You can also scan the Alipay QR code below to give us a reward, which helps us upgrade our teamwork software or buy a coffee.